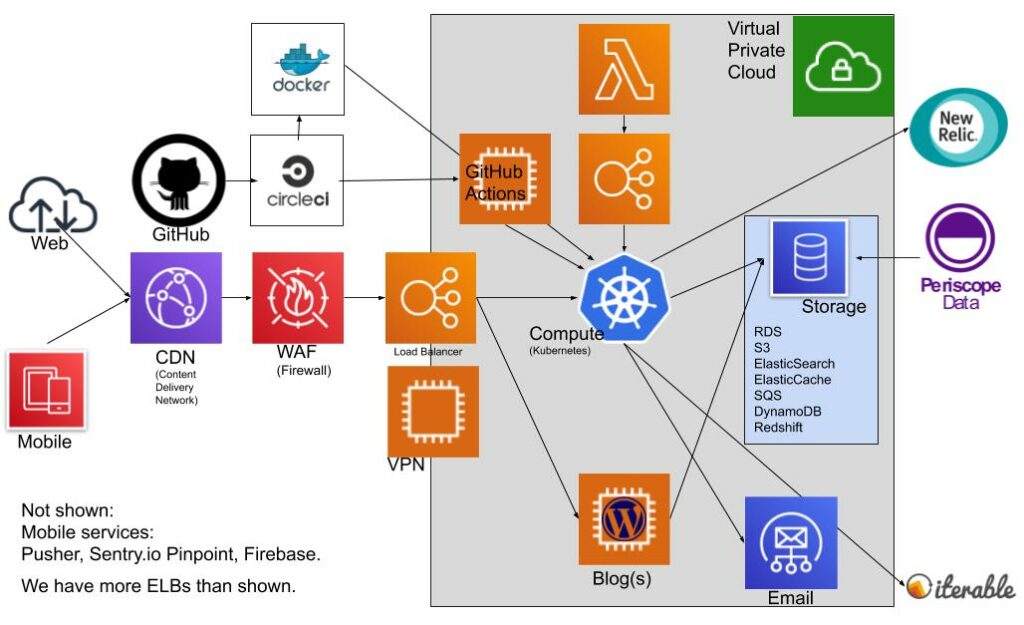

Our Tech Stack 2020

I last wrote about our tech stack 2 years ago. There hasn’t been a huge change; we’ve been moving slowly and steadily. Our migration from PHP/Yii to JavaScript/React is not yet complete, but we’re getting there: 3 years on, we’re 40% done. Migrating frameworks is a big task, so don’t underestimate how hard it is! Because of our page-by-page migration approach (“the Strangler Pattern”), we used up all 100 path-routing rules allowed by our load-balancer. We were pleased to submit a patch upstream to the AWS Application Load Balancer ingress controller, which allowed us to get back 50 rules: https://github.com/kubernetes-sigs/aws-alb-ingress-controller/pull/1162 .
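To make the Strangler Pattern concrete, here is a minimal sketch of page-by-page routing: requests whose path falls under an already-migrated prefix go to the new app, and everything else falls through to the legacy one. The backend names and path prefixes are hypothetical, and this is not how the load-balancer or ingress controller is implemented. It only shows why every migrated section adds another path the load-balancer has to match.

```python
"""Minimal sketch of Strangler Pattern path routing (hypothetical paths and backends)."""

# Hypothetical backend names standing in for the new React app and the legacy PHP/Yii app.
NEW_APP = "react-frontend"
LEGACY_APP = "php-yii"

# Hypothetical path prefixes that have already been migrated.
# Each entry is one more path the load-balancer must match.
MIGRATED_PREFIXES = ["/projects", "/freelancers/search", "/messages"]


def route(path: str, migrated=MIGRATED_PREFIXES) -> str:
    """Send a request to the new app if its path falls under a migrated prefix."""
    for prefix in migrated:
        # Match the prefix itself or anything beneath it, but not e.g. "/projectsfoo".
        if path == prefix or path.startswith(prefix + "/"):
            return NEW_APP
    # Anything not yet migrated still goes to the legacy app.
    return LEGACY_APP


if __name__ == "__main__":
    for p in ["/projects", "/projects/123", "/freelancers", "/freelancers/search", "/login"]:
        print(f"{p:25} -> {route(p)}")
```

Note that "/freelancers" stays on the legacy app while "/freelancers/search" has moved: migrating one page at a time naturally produces many narrow prefixes rather than a few broad ones.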

We are very happy that we adopted Kubernetes. It’s a pleasure to run a cluster with auto-scaling and many self-healing features. Kubernetes sits at the center of everything.

We adopted Pod Security Policies to ensure Pods don’t run in “Privileged Mode” by default. Our biggest teething trouble was DNS: we suffered from periodic DNS failures and timeouts. We eventually found that setting ndots to 1 in every Pod’s DNS settings solved our issues, because it reduces the number of DNS lookups enormously; read Marco Pracucci’s post for all the details. Along the way, we also adopted a node-local DNS cache. It makes sense that most DNS requests should be resolved locally, without a network hop, and it reduces network clutter. It was a bit of a surprise to find that adopting Kubernetes would require us to spend months debugging low-level DNS issues. We expected DNS to be a solved problem out of the box, especially on a managed service like EKS.
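Why does ndots matter so much? A sketch of the glibc-style resolver rule helps: if a name has at least ndots dots, it is tried as-is first; otherwise every search domain is appended and tried before the bare name. The search list below is an assumption (the usual one for a Pod in the "default" namespace with the "cluster.local" domain; real Pods may also inherit search domains from the node). In Kubernetes, ndots can be set per Pod through the Pod spec's dnsConfig options.

```python
"""Sketch of how a glibc-style stub resolver expands a name using ndots and a search list."""

# Assumed search list for a Pod in the "default" namespace with the usual
# "cluster.local" cluster domain; real Pods may inherit extra domains from the node.
SEARCH = ["default.svc.cluster.local", "svc.cluster.local", "cluster.local"]


def candidate_queries(name: str, search: list[str], ndots: int) -> list[str]:
    """Return the fully qualified names the resolver would try, in order."""
    if name.endswith("."):
        # A trailing dot marks the name as absolute: no search-list expansion.
        return [name]
    absolute = [name + "."]
    expanded = [f"{name}.{domain}." for domain in search]
    if name.count(".") >= ndots:
        # Enough dots: try the name as-is first, then fall back to the search list.
        return absolute + expanded
    # Too few dots: walk the search list first and try the name as-is last.
    return expanded + absolute


if __name__ == "__main__":
    for ndots in (5, 1):
        tries = candidate_queries("api.example.com", SEARCH, ndots)
        position = tries.index("api.example.com.") + 1
        print(f"ndots={ndots}: first try {tries[0]}, real name is attempt #{position}")
```

With the Kubernetes default of ndots:5, an external name like "api.example.com" walks three doomed search-domain lookups before the real one (often doubled again, since A and AAAA records are commonly queried separately). With ndots:1 it is tried first, while short in-cluster names such as "redis" still get the search list.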

We love GitHub Actions. We use them to automatically apply changes to infrastructure. For example, if developers need to update an ENV var, they can commit the new value; a GitHub Action will pick up the change and kubectl apply the new ConfigMap. In another example, after tests pass, our build system (CircleCI) builds a new Docker image, pushes it to Docker Hub, then commits the new image name to the Kubernetes Deployment manifest. That commit triggers a GitHub Action that runs kubectl apply on the Deployment and rolls out the new version of the app. It’s not pure GitOps, but git is now the main source of truth, and it’s comforting to have all our Kubernetes infrastructure and our Terraform infrastructure tracked in git.
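The "commit the new image name" step can be sketched as a small manifest edit. This is a hypothetical illustration, not our actual CI script: the Deployment is trimmed down and already parsed into a dict (a real pipeline would read and write YAML), and the container and image names are made up. Committing, pushing and the kubectl apply are left to CI and the GitHub Action.

```python
"""Sketch of the 'commit the new image name' step of a git-driven deploy (hypothetical names)."""
import copy


def set_container_image(manifest: dict, container_name: str, image: str) -> dict:
    """Return a copy of a Deployment manifest with one container's image replaced."""
    updated = copy.deepcopy(manifest)  # leave the caller's manifest untouched
    containers = updated["spec"]["template"]["spec"]["containers"]
    for container in containers:
        if container["name"] == container_name:
            container["image"] = image
            return updated
    raise KeyError(f"no container named {container_name!r} in manifest")


# Hypothetical, trimmed-down Deployment (selector and labels omitted), parsed into a dict.
DEPLOYMENT = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "template": {
            "spec": {
                "containers": [{"name": "web", "image": "example/web:old-sha"}]
            }
        }
    },
}

if __name__ == "__main__":
    bumped = set_container_image(DEPLOYMENT, "web", "example/web:new-sha")
    print(bumped["spec"]["template"]["spec"]["containers"][0]["image"])
```

Because the image lives in the Pod template, applying the changed manifest is what makes Kubernetes start a rolling update of the Deployment.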

Another GitOps project we did was to adopt “Sealed Secrets”. It allows us to commit an encrypted version of our secrets to git, and a Kubernetes controller automatically decrypts them into normal Kubernetes Secrets. It’s a very convenient way to manage secrets.
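It helps to see why a normal Secret can’t simply be committed: the values in its data field are only base64-encoded, which anyone can reverse. The sketch below builds a plain Secret manifest as a dict; the Secret name and the password are hypothetical, and it does not model how Sealed Secrets encrypts anything.

```python
"""Why a plain Secret can't go into git: its values are only base64-encoded."""
import base64


def make_secret(name: str, namespace: str, values: dict[str, str]) -> dict:
    """Build a Kubernetes Secret manifest (as a dict) from plaintext values."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "Opaque",
        "metadata": {"name": name, "namespace": namespace},
        # The "data" field holds base64-encoded strings: an encoding, not encryption.
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


if __name__ == "__main__":
    secret = make_secret("db-credentials", "default", {"password": "hunter2"})
    encoded = secret["data"]["password"]
    print(encoded)  # aHVudGVyMg==
    # Anyone who can read the manifest recovers the plaintext in one call.
    print(base64.b64decode(encoded).decode("utf-8"))
```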

We moved to Bottlerocket, an Operating System optimised for hosting Containers. The history of our Web server OS looks like this:

CentOS →2010→ Ubuntu →2016→ CoreOS →2018→ Amazon Linux 2 →2020→ Bottlerocket.

We loved CoreOS and only migrated away from it because it reached end-of-life. Bottlerocket reminds me quite a bit of CoreOS. We want the minimum OS needed to run Kubernetes. We don’t need to SSH into our nodes, so SSH access is disabled, which is comforting for security. The OS is a commodity now; I think of Kubernetes as the OS more than the actual OS on the VM. I had expected to have migrated to Containers-as-a-Service by now, with no Nodes to manage. The truth is, Nodes are painless to manage under Kubernetes, and Fargate is unattractive because it doesn’t support DaemonSets and doesn’t save us any money.

We retired MongoDB and instead use Elasticsearch, Redis or DynamoDB, depending on the use case. MongoDB was good, and we probably would have kept using it had we known that AWS was going to release “Amazon DocumentDB (with MongoDB Compatibility)”, but we go through cycles of simplifying our tech stack and MongoDB was caught in one of them.

Going forward, we don’t have any big infrastructure plans. Adopting a service mesh is on our radar, but we’re resisting until the benefits are undeniable. Our biggest plans focus on improving search and improving the technology we use to match our Projects with our Freelancers. The future is about data science!
