AWS Lambda development pipeline

Overview

Over the past year, PeoplePerHour has entered the microservices arena, containerising its platform and splitting it into services.

In the process, we realised how much we lacked message buses and long-running daemons to process requests, either in real time or asynchronously.

This is where AWS helped, with its Kinesis and Lambda products. We could now send asynchronous requests from our platform to Kinesis, to be executed eventually by Lambda. And we could do this en masse, without worrying about scaling and with an acceptable level of redundancy built in.
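
As a rough illustration of the Lambda side of this flow, here is a minimal sketch of a handler that receives a batch of Kinesis records. Kinesis delivers each payload base64-encoded under `Records[i].kinesis.data`; the assumption that every payload is a JSON document, and the names `decodeKinesisRecords` and `handler`, are made up for this example. On older Node.js runtimes that lack `Buffer.from`, `new Buffer(data, 'base64')` plays the same role.

```javascript
// Decode the base64 payload of every Kinesis record in the event.
// Assumption for this sketch: each payload is a JSON document.
function decodeKinesisRecords(event) {
  return (event.Records || []).map(function (record) {
    var json = Buffer.from(record.kinesis.data, 'base64').toString('utf8');
    return JSON.parse(json);
  });
}

// Lambda entry point: decode the batch, process each message, report back.
function handler(event, context, callback) {
  var messages;
  try {
    messages = decodeKinesisRecords(event);
  } catch (err) {
    // A payload that is not valid JSON fails the whole batch.
    return callback(err);
  }
  messages.forEach(function (message) {
    // Real processing would go here; the sketch only logs the message.
    console.log('processing', JSON.stringify(message));
  });
  callback(null, 'processed ' + messages.length + ' record(s)');
}

exports.handler = handler;
```

Failing the invocation on a bad payload is a deliberate choice in this sketch: Lambda retries a failed Kinesis batch, so a real handler may prefer to skip or park malformed records instead.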

And so we entered the serverless era.

However, Lambda, despite being an amazingly powerful product, lacks basic tooling in four main areas:

- Development Pipeline: from Continuous Integration to Continuous Deployment

- Authentication: how to authenticate with other services by the use of secrets and keys without making them publicly accessible

- Local Development: how to develop locally before deploying the lambda function to AWS

- Monitoring and alarms: how to know when there is an issue in the running lambdas

Without the above mechanisms, running a production workload is definitely not recommended, so we decided to build our own tools to fill these gaps.

Development Pipeline

Our goal was to have a proper Continuous Integration and Continuous Deployment mechanism for our lambdas.

We write our lambdas in Node.js and test them with Mocha on our Continuous Integration tool, which happens to be CircleCI.

Once our lambda code is on GitHub, CircleCI, which is integrated with GitHub, runs the Mocha tests and reports back whether they pass. If the green light is given (yes, they passed!), the code is merged and the deployment mechanism kicks in!

Our deployment recipe has to decide which of our lambdas need updating and push the new code for those to AWS Lambda.

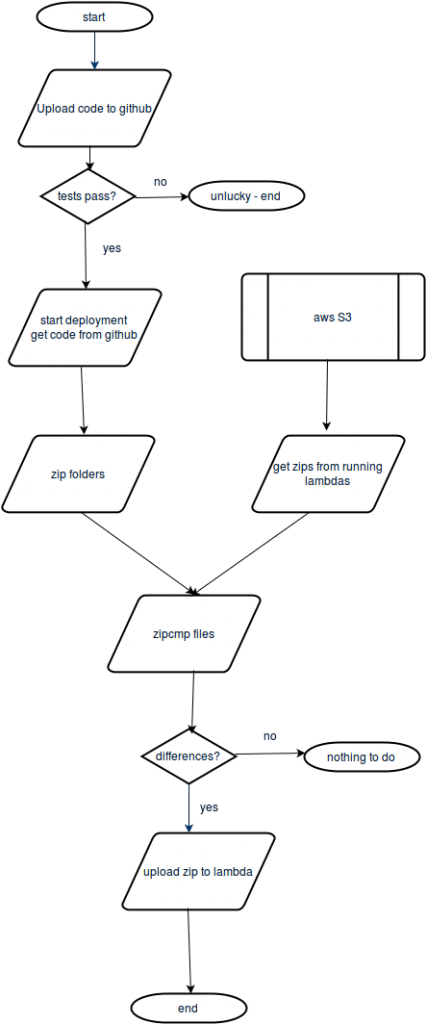

Diagramatically:

The mechanism, step by step:

- get the currently deployed lambda function from AWS (this is usually a zip file in S3)

- get the new code from GitHub and zip it

- use zipcmp to compare the zipped GitHub code with the file in S3, to see whether anything has changed

- if there is a change, use the AWS CLI's aws lambda update-function-code command to update the running lambda
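
The compare-and-update decision in the last two steps can be sketched in Node.js as follows. This is an illustration only, not the contents of our shell scripts: the function names, the injectable `runner` parameter and the file paths are hypothetical, and the deployed zip is assumed to have been downloaded already. It relies on zipcmp exiting with 0 when the archives match, 1 when they differ and a higher status on error.

```javascript
var childProcess = require('child_process');

// Run a command synchronously and return its exit status.
function run(cmd, args) {
  var result = childProcess.spawnSync(cmd, args, { stdio: 'inherit' });
  if (result.error) {
    throw result.error;
  }
  return result.status;
}

// Interpret zipcmp's exit status: 0 = identical, 1 = different, other = error.
function needsUpdate(zipcmpStatus) {
  if (zipcmpStatus === 0) { return false; }
  if (zipcmpStatus === 1) { return true; }
  throw new Error('zipcmp failed with status ' + zipcmpStatus);
}

// Compare the deployed archive with the freshly built one and push the new
// code only when they differ. Returns true when an update was pushed.
function compareAndUpdate(functionName, deployedZip, newZip, runner) {
  runner = runner || run; // the runner is injectable so the logic can be tested
  if (!needsUpdate(runner('zipcmp', [deployedZip, newZip]))) {
    return false;
  }
  var status = runner('aws', [
    'lambda', 'update-function-code',
    '--function-name', functionName,
    '--zip-file', 'fileb://' + newZip
  ]);
  if (status !== 0) {
    throw new Error('update-function-code failed for ' + functionName);
  }
  return true;
}
```

Calling `compareAndUpdate('my-function', '/tmp/deployed.zip', '/tmp/new.zip')` (hypothetical names) once per lambda would then update only the functions whose code actually changed.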

For everyone's use, this is our circle.yml file, which gives a nice compact overview of our cycle:

    ---
    machine:
      node:
        version: 4.4.2
    dependencies:
      override:
        - sudo pip install --upgrade awscli
        - sudo apt-get install zipcmp --assume-yes
        # Write the AWS CLI config; the credentials depend on the branch being built.
        - |
          mkdir -p ~/.aws/
          echo "[default]" >> ~/.aws/config;
          echo "region=us-east-1" >> ~/.aws/config;
          echo "output=json" >> ~/.aws/config;
          if [ "$CIRCLE_BRANCH" == "master" ]; then
            export AWS_BUCKET=lambdas-master;
            echo "aws_access_key_id=$ACCESS_KEY" >> ~/.aws/config;
            echo "aws_secret_access_key=$SECRET_KEY" >> ~/.aws/config;
          fi;
          if [ "$CIRCLE_BRANCH" == "develop" ]; then
            export AWS_BUCKET=lambdas-next;
            echo "aws_access_key_id=$DEVELOP_ACCESS_KEY" >> ~/.aws/config;
            echo "aws_secret_access_key=$DEVELOP_SECRET_KEY" >> ~/.aws/config;
          fi;
        # Install the dependencies of every lambda that ships a package.json.
        - |
          for lambda_package_json in ~/lambdas/**/package.json;
          do
            filepath="$lambda_package_json";
            cd "${filepath%/*}";
            npm install;
          done
        - npm install
    deployment:
      staging:
        branch: develop
        commands:
          - ./zip.sh
          - ./compareAndUpdate.sh
      production:
        branch: master
        commands:
          - ./zip.sh
          - ./compareAndUpdate.sh

Of course, along the way one runs into small but vicious pitfalls, such as the creation of node_modules folders and the bundling of node dependencies. Covering these would take too much detail, so if you run into trouble, just leave us a comment and we will get back to you!

Authentication / Credentials

In order for Lambda to communicate with third-party tools or even your own servers, it needs some kind of authentication.

For example, our lambdas push some data to Pusher, and we would not feel safe committing our Pusher credentials to GitHub (even though our repositories are private). The same holds for all the other SaaS tools we use.

There are many ways to keep authentication keys out of the code and still get them to Lambda, some of which are:

- holding the secrets in S3 files

- calling an API on your own servers that answers back with the secrets

Since we run our servers on CoreOS with etcd, we decided to use etcd. At the start of each lambda we call our etcd server, which replies with the config for that function; the config is then loaded in the lambda's execution file.

To communicate with our etcd cluster we use the Node.js script below:

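    var http = require('http');
    var assert = require('assert');

    module.exports = {
      // Fetch the JSON config stored under one etcd key, copy each entry into
      // process.env and resolve with the parsed config object.
      fetch: function (host, path, port) {
        return new Promise(function (resolve, reject) {
          var requestOpts = {
            host: host,
            path: path,
            port: port
          };

          // A failed assertion throws inside the executor, which rejects the promise.
          assert.ok(requestOpts.host !== undefined, "etcd error : Host is required!!!");
          assert.ok(requestOpts.path !== undefined, "etcd error : Path is required!!!");
          assert.ok(requestOpts.port !== undefined, "etcd error : Port is required!!!");

          http.get(requestOpts, function (res) {
            var body = '';
            res.on('data', function (chunk) {
              body += chunk;
            });
            res.on('end', function () {
              try {
                // etcd wraps the stored value in response.node.value
                var response = JSON.parse(body);
                var config = JSON.parse(response.node.value);
                for (var key in config) {
                  process.env[key] = config[key];
                }
                resolve(config);
              } catch (error) {
                // Malformed JSON or a missing node would otherwise throw uncaught
                reject(new Error('Failed to parse data from etcd'));
              }
            });
            res.on('error', function (error) {
              reject(new Error('Failed to retrieve data from etcd'));
            });
          }).on('error', function (error) {
            // Connection failures are emitted on the request, not the response
            reject(new Error('Failed to retrieve data from etcd'));
          });
        });
      }
    };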

The config is pushed to our etcd servers using Ansible Tower.
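
To show how the fetch step might sit at the start of a lambda, here is a minimal sketch of a wrapper that loads the config before running the handler body. The wrapper name `withConfig`, the module path `./etcd`, the host and the key path in the usage comment are all hypothetical; only the `fetch(host, path, port)` signature comes from the script above.

```javascript
// Wrap a handler body so the config is fetched before the body runs.
// fetchConfig: function returning a Promise that resolves to the config.
// handlerBody: function (event, config) returning a result or a Promise.
function withConfig(fetchConfig, handlerBody) {
  return function (event, context, callback) {
    fetchConfig()
      .then(function (config) {
        return handlerBody(event, config);
      })
      .then(
        function (result) { callback(null, result); },
        function (err) { callback(err); } // fetch or body failure
      );
  };
}

// Usage sketch (hypothetical module path, host and key):
// var etcd = require('./etcd');
// exports.handler = withConfig(
//   function () {
//     return etcd.fetch('etcd.example.internal', '/v2/keys/lambdas/my-function', 2379);
//   },
//   function (event, config) {
//     return 'got ' + Object.keys(config).length + ' config entries';
//   }
// );
```

Passing `fetchConfig` in as a function keeps the wrapper independent of etcd, so the same handler body can be exercised locally with a stubbed config.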

Local Development

These tools are great, but to be efficient, developers need to be able to develop locally.

We have two ways to develop locally:

- Use the lambda-local npm module

- Use a simple run.js file, as below:

    var AWS = require('aws-sdk');

    AWS.config.update({
      'cat-name' : 'donald',
      'cat-likes' : 'hugs',
      'cat-hates' : 'cold water'
    });

    var handler = require('./index.js').handler;
    var event = require('./events/kinesis.js');
    var context = require('../PPH_modules/lambda_mock_context');

    handler(event, context);

Using the second option, we can easily simulate the way the lambda function runs on AWS, with specific configs supplied from outside the function, as per the etcd authentication mechanism described above.
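
The mock context and the sample event that run.js loads are not shown here, so the following is only a guess at what minimal stand-ins could look like. The function names `createMockContext` and `createKinesisEvent`, the defaults and the field values are invented for illustration; the property names mirror those Lambda puts on its context object and Kinesis events.

```javascript
// Build a stand-in for the Lambda context object, for local runs only.
function createMockContext(options) {
  options = options || {};
  var timeoutMs = options.timeoutMs || 3000;
  var startedAt = Date.now();
  var context = {
    functionName: options.functionName || 'local-function',
    awsRequestId: 'local-' + startedAt,
    // Time left before the simulated timeout, never negative.
    getRemainingTimeInMillis: function () {
      return Math.max(0, timeoutMs - (Date.now() - startedAt));
    },
    // Legacy completion methods used by older Node.js handlers.
    succeed: function (result) { console.log('succeed:', JSON.stringify(result)); },
    fail: function (error) { console.error('fail:', String(error)); },
    done: function (error, result) {
      if (error) { context.fail(error); } else { context.succeed(result); }
    }
  };
  return context;
}

// Build a Kinesis-shaped event from plain objects (base64-encoded JSON).
function createKinesisEvent(payloads) {
  return {
    Records: payloads.map(function (payload, index) {
      return {
        eventSource: 'aws:kinesis',
        kinesis: {
          partitionKey: 'local',
          sequenceNumber: String(index),
          data: Buffer.from(JSON.stringify(payload)).toString('base64')
        }
      };
    })
  };
}
```

With these in place, a local run reduces to `handler(createKinesisEvent([{ id: 1 }]), createMockContext())`, where `handler` is the function exported by the lambda under test.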

Monitoring and alarms

Another critical aspect of production workloads is knowing when things don’t go well, either because of errors or when anomalies appear.

Truth is, AWS offers logs, but it is close to impossible to find what you are looking for in them.

However, despite having devoted so much time to lambdas, we are unfortunately still missing this final piece. We are looking for tools that can integrate with our Elasticsearch and Kibana monitoring setup, and we are getting fairly close.

Stick with us and news on this will follow soon!
